Since it was introduced in 2018, Google Search Console’s URL Inspection Tool has been an indispensable aid to webmasters and SEOs, helping them understand the indexation status of their site’s URLs. One handy feature of the URL Inspector is the Live URL Test button, which lets you test the current version of your page as Google would see it.

But when I used Live Test to test some redirects, I found that it reported an HTTP status code 200 instead of a 301 (redirect), which confused me greatly!

Where to find the Live URL Test in the URL Inspection Tool

First, let’s cover where to find the URL Inspection Tool. The tool sits at the top of every page in Google Search Console. Simply enter the URL you want more information on into the entry box.

You can also access the inspector by clicking on the magnifying glass next to a URL. This is available in multiple reports (you may need to mouse over the URL to see the icons).

The information you’ll get back is the state of indexation for that URL at the time it was last crawled. It might look something like this:

Or it might tell you that your URL is NOT indexed and give you a reason why.

To see when the URL was last crawled, click on the down arrow on the Page Indexing tile to get more information. You might have changed the page since it was crawled, so to get feedback from Google on the updated version, you’ll want to use the Live URL Test. The Live URL Test button is located at the upper right corner of the page.

Using Live URL Test to Test Redirects Didn’t Go So Well

I was helping a client recover from a big drop in organic traffic. I had found that many of their redirects were broken. They had migrated away from an ASP.NET site, and many of their older backlinks pointed to the old .aspx URLs that were now returning 404s, leaking valuable link juice into the internet ether.

Their new site was built on Angular, a JavaScript framework that comes with its own set of SEO challenges (which I should cover in another post one day). The dev team had struggled with properly implementing redirects in Angular and had unintentionally broken many of the previously implemented redirects. The fact is, SPAs (Single Page Applications) such as those built with Angular don’t always handle redirects well, and the best thing to do is to configure the web server to handle the redirects instead, in a file such as .htaccess.

I created a list, met with the dev team and the 301 redirects were implemented shortly thereafter.

When I was testing the new redirects, I used the URL Inspection Tool’s Live URL Test as a sanity check on the redirecting URLs. Given this was an Angular site, I was trying to be thorough: I wanted to be convinced that Google could see the 301.

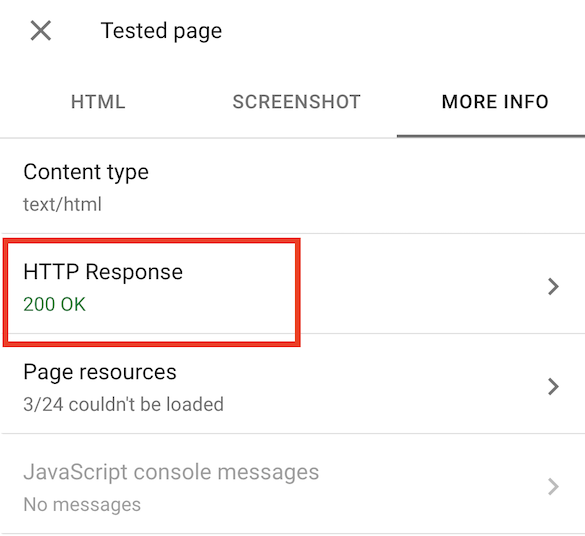

In our case, the URL Inspector was showing the URL as not indexed due to a Not Found (404), as in the screenshot above. When I live tested the URL, I was happy to see that the URL was available for indexing. But I also wanted to double check the HTTP status code of the tested URL. To see this, you can click on “View Tested Page” and then click on the “More Info” tab when the sidebar on the right appears.

I was surprised to see that the Status Code was 200 rather than 301.

This stopped me cold before the next step, which was to click on “Request Indexing” to ask Google to recrawl the URL so it would see the newly implemented 301 redirect instead of a 404.

Why is GSC Live Test showing a 200 instead of a 301?

I’ve seen cases in the past where a site returned a different status code to Googlebot than to a regular browser. But that was not the situation here: testing with curl (using the -A parameter to send a Googlebot User-Agent) showed a 301 HTTP status code. Additionally, when I crawled the old URLs with Screaming Frog (with the User Agent set to Googlebot-Smartphone), it reported HTTP 301 status codes for all of them.
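If you would rather script that curl check, here is a minimal Python sketch using only the standard library. It makes a single request with a Googlebot-style User-Agent and does not follow redirects, so it reports the status code of the first response. The function name `first_hop` is my own, and the User-Agent string is an assumption you should swap for whichever one you want to test with.

```python
import http.client
from urllib.parse import urlsplit

# Assumption: a Googlebot-style User-Agent string, used here only for illustration.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"


def first_hop(url, user_agent=GOOGLEBOT_UA, timeout=10):
    """Request `url` once, without following redirects.

    Returns (status_code, Location header or None).
    """
    parts = urlsplit(url)
    if parts.scheme == "https":
        conn = http.client.HTTPSConnection(parts.netloc, timeout=timeout)
    else:
        conn = http.client.HTTPConnection(parts.netloc, timeout=timeout)
    try:
        path = parts.path or "/"
        if parts.query:
            path += "?" + parts.query
        # http.client never follows redirects on its own, so this is the raw first response.
        conn.request("GET", path, headers={"User-Agent": user_agent})
        response = conn.getresponse()
        return response.status, response.getheader("Location")
    finally:
        conn.close()
```

Calling `first_hop()` on one of the old .aspx URLs should return the 301 and its target if the server-side redirect is in place. Keep in mind this only changes the User-Agent header; it does not make the request come from Google’s IP addresses.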

Not understanding at all what was going on, I took a look at the server logs and found that the web server was indeed sending a 301 to Googlebot. In fact, all of the Googlebot requests for .aspx URLs were getting a 301.
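For anyone who wants to repeat that log check, here is a rough Python sketch that tallies the status codes served to Googlebot for .aspx URLs. It assumes the access log is in the common "combined" format; your server’s format may differ, in which case the regular expression needs adjusting. The function name and the sample lines are hypothetical, not taken from the client’s logs.

```python
import re
from collections import Counter

# Assumption: "combined" access log format, i.e.
# ip - - [time] "METHOD path PROTOCOL" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_aspx_statuses(lines):
    """Count status codes for requests whose User-Agent mentions Googlebot
    and whose path (ignoring the query string) ends in .aspx."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines that do not fit the assumed format
        path = match.group("path").split("?", 1)[0]
        if "Googlebot" in match.group("agent") and path.lower().endswith(".aspx"):
            counts[int(match.group("status"))] += 1
    return counts


# Hypothetical sample lines, for illustration only.
sample = [
    '192.0.2.1 - - [01/Jan/2024:10:00:00 +0000] "GET /products.aspx HTTP/1.1" 301 0 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '192.0.2.2 - - [01/Jan/2024:10:00:05 +0000] "GET /products.aspx HTTP/1.1" 301 0 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    '192.0.2.1 - - [01/Jan/2024:10:00:09 +0000] "GET /new-page HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]
status_counts = googlebot_aspx_statuses(sample)
```

With the sample lines above, only the first one counts: the second is not Googlebot and the third is not an .aspx URL. In practice you would pass the open log file instead of `sample`.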

At this point I was wondering, “Is Live Test in Google Search Console broken?”

How about this for more confusion? For redirecting pages that show as “Not indexed”, the URL Inspection Tool will, as you would expect, show the reason as “Page with redirect”:

But when you Live Test the same URL, you’ll be shown an HTTP 200 response.

Confusing, right?

True Destination URL

It turns out that the GSC Live URL Test was not broken and was working as designed. What is happening is that Google Search Console follows the redirect to the final target URL, which in our case does indeed return an HTTP 200.

So to walk through this again (because it is confusing):

- The URL Inspection Tool tells you that your URL is not indexed due to a Redirect.

- But when you Live Test the URL, it silently follows the redirect to the “true destination URL” and reports the HTTP Response from that destination URL (which could be a 200 or something else).
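To make the two views concrete, here is a small Python sketch of the walk-through above. It follows a redirect chain hop by hop and keeps every status code, so you can compare the first hop (what curl and the server logs show) with the last hop (which, per the behaviour described above, is what Live Test reports). The names `follow_chain` and `fetch` and the example.com URLs are placeholders of mine; `fetch` stands in for any function that makes one request without following redirects and returns the status code and Location header.

```python
from urllib.parse import urljoin

REDIRECT_CODES = {301, 302, 303, 307, 308}


def follow_chain(url, fetch, max_hops=10):
    """Follow redirects from `url`, returning a list of (url, status) hops.

    `fetch(url)` must return (status_code, location_or_None) for ONE request.
    The chain ends at the first response that is not a redirect.
    """
    hops = []
    for _ in range(max_hops):
        status, location = fetch(url)
        hops.append((url, status))
        if status not in REDIRECT_CODES or not location:
            return hops
        # Location may be relative, so resolve it against the current URL.
        url = urljoin(url, location)
    raise RuntimeError(f"gave up after {max_hops} hops")


# Hypothetical responses standing in for a real server.
fake_site = {
    "https://example.com/old-page.aspx": (301, "/new-page"),
    "https://example.com/new-page": (200, None),
}

hops = follow_chain("https://example.com/old-page.aspx", lambda u: fake_site[u])
first_status = hops[0][1]            # the 301 that curl and the logs see
final_url, final_status = hops[-1]   # the "true destination URL" and its status
```

Here the first hop is a 301 and the final hop is a 200, which is exactly the mismatch that confused me.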

This concept of the “true destination URL” (h/t to Glenn Gabe for this descriptive term in his excellent article on this topic) is pervasive throughout the GSC Pages report; it’s not just in the URL Inspector.

Applies to more than one scenario

True destination URLs can pop up in other scenarios too:

Can’t figure out why GSC is reporting your URL as “blocked by robots.txt” when there is no matching Disallow statement in robots.txt? Maybe the URL is redirecting to a URL that IS blocked by robots.txt.
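A quick way to test that theory is to check the destination URL, not the original one, against your robots.txt rules. The sketch below uses Python’s built-in `urllib.robotparser`; the robots.txt content and URLs are made up for illustration, and `blocked_by_robots` is my own helper name. Note that Python’s parser does not implement every matching rule Google uses, so treat the result as a hint and not a verdict.

```python
from urllib.robotparser import RobotFileParser


def blocked_by_robots(robots_txt, url, user_agent="Googlebot"):
    """Return True if `robots_txt` (the file's text) disallows `url` for `user_agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


# Hypothetical robots.txt and URLs, for illustration only.
robots_txt = """\
User-agent: *
Disallow: /private/
"""
old_url = "https://example.com/old-page.aspx"          # the URL you inspected
destination = "https://example.com/private/new-page"   # where it redirects to

old_blocked = blocked_by_robots(robots_txt, old_url)
destination_blocked = blocked_by_robots(robots_txt, destination)
```

In this made-up case the inspected URL matches no Disallow rule, but its destination does, which would explain a “blocked by robots.txt” report on the original URL.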

Once you understand that the reports and the Live URL Test tool are based on the response from the final URL in a redirect chain, everything starts to make a lot more sense.

It does occur to me that this feature in Google Search Console gives us an opportunity to test how many redirects Google will follow, but that’s a project for another day.